Since late 2015 at Aalborg University, we have been experimenting with new technologies and enhanced methods for ethnomethodological conversation analysis and video ethnography, supported by our respective departments, the Video Research Lab (VILA), and the Digital Humanities Lab 1.0 national infrastructure project.

Nowadays, there is no shortage of new recording technology and mobile gadgets to try out – just take a look at Stuff, T3 or Gadget or the more specialised, professional magazines like HDVideoPro or Pro Moviemaker! There are many avenues and dead-ends we have explored, as well as methodological gains and problems that we have encountered. We'd like to sketch out some of these here.

Who are we?

- Professor Paul McIlvenny, Department of Culture and Global Studies, AAU

- Associate Professor Jacob Davidsen, Department of Communication and Psychology, AAU

Motivations

One motivation for this enquiry is our worry that in our field, ethnomethodological conversation analysis (EMCA), very few papers discuss data collection in itself or discuss methodologically how recordings were collected for a particular study in terms of how (socio)technical practices shaped their choice of data. The general assumption is that once the ‘Tape’ is made, and it is often made fairly automatically and locked into a ‘black box’ functionality (eg. auto-gain, auto-focus, auto-white balance), it is an unquestionable and adequate record for an EMCA analysis. There is often a simple faith that as little interference with, and understanding of, the operation of the technology as possible is more conducive to collecting better recordings, despite the algorithmic normativity of default functions. Ashmore & Reed (2000) have indicated how naïve such a realist view can be, especially when considering the relationship between ‘the Tape’ and ‘the Transcript’. And scholars in film studies, such as Richard Dyer (1997), have shown how dangerous it can be in relation to the racial politics of film and image technologies.

History

Intriguingly, in 1987, the journal Research on Language and Social Interaction published a paper by Wendy Leeds-Hurwitz that sifted through the unpublished records of some of the early experiments with audio and film recordings as ‘data’ for microanalysis by a multidisciplinary research group started at Stanford University in 1955-56. Publications and records of these experiments and proto-‘data sessions’ (“soaking”) are not easily accessible, eg. the 16-mm family therapy ‘Doris’ film with synced sound that Gregory Bateson brought in 1956 to the group. (It is a crying shame that in this digital age there is not a free, open-access, online archive of core and marginal publications, unpublished documents and recordings from the 1950s and 60s – Sacks’ transcribed lectures being a prime exception.) This multidisciplinary group, which included Ray Birdwhistell, focused on the ‘natural history of an interview’ over a number of years (according to Frederick Erickson, Erving Goffman met with Bateson and other members of the group in the late 1950s). In hindsight, Erickson (2004) suggests that the differing affordances of film (for example, for this Palo Alto group) versus video (and cassette audio) for later scholars may have had an impact on the routine seeing/looking and listening/hearing practices of scholars in each period, a difference that may have privileged sequentiality over simultaneity. We could ask: are we at a similar juncture today?

There were a few papers published in the past that brought readers up to speed on new technological developments and best practices, and though they are now anachronistic, they were important pedagogically, eg. Goodwin (1993) and Modaff & Modaff (2000). However, the impact of digitalisation is so pervasive now that their specific analogue concerns are mostly irrelevant. From our experiments and reflections, we contend that today there is a set of paradigm shifts. With the complexity of the recording scenarios, and the increasing use of computational tools and resources, we position ourselves in what we call BIG VIDEO. We use this rather facile term to counter the hype about quantitative big data analytics. Basically, we are hoping to develop an enhanced infrastructure for analysis with innovation in four key areas: 1) capture, storage, archiving and access of digital video; 2) visualisation, transformation and presentation; 3) collaboration and sharing; and 4) qualitative tools to support analysis. BIG VIDEO involves dealing with complex human data capture scenarios, large databases and distributed storage, computationally intensive virtualisation and visualisation of concurrent data streams, distributed collaboration and necessarily a new big video data ethics – and, of course, we are only interested in these matters because analysis comes first (or, as they say in the 3D and VR industry, it is always the story that comes first).

Practical methods

So what have we been doing? One key focus has been to collect richer video and sound recordings in a variety of settings. And this means developing a sense of good camerawork/micwork (with both existing and new technologies) in order to collect analytically adequate recordings. We have used swarm public video (see McIlvenny forthcoming), 4k video cameras, network cameras, 360° cameras, S3D cameras, spatial audio, multichannel video and audio, GPS and heart rate tracking, and multichannel subtitling and video annotation, and we are beginning to work with local positioning systems (LPS) and beacons, as well as biosensing data, to see what is relevant to our EMCA concerns.

In Peter Wintonick’s illuminating documentary Cinéma vérité – defining the moment (1999), filmmaker Robert Koenig says, “the camera… has to be open to the moments that reveal the things you cannot otherwise see”. While this is still the case with new recording technologies, we are no longer constrained to the angle, frame and perspective of our camera for revealing phenomena for analysis. What if people, actions, events or objects outside the scope of our camera frame influenced the primary activity, or if the participants suddenly moved out of focus? Most of us have tried watching a recording in which some important element of the interaction is taking place on the borders of the camera’s frame or focus. This can be very frustrating! With 360° cameras this problem is almost eliminated, and much can be done in post-editing, but bear in mind that a 360° camera also has a point of viewing. Thus, new technologies also require careful methodological and practical attention.

Since January 2016, we have collected video recordings in a variety of settings, including, in chronological order, dog sledding, architecture student group work, disability mobility scootering, live interactive 360° music streaming to an acquired brain injury centre, mountain bike off-road racing, guided nature tours on foot and mountain bike, home hairdressing (hair extensions), a family cycle camping holiday and, most recently, Pokemon Go hunting. Taking two of these as case studies, we will elaborate a little on what we did. Unfortunately, given the sensitivity of the recordings, we cannot illustrate with examples on a public website.

360 degrees

In order to collect some mobility data in new ways, especially in relation to sound, it was desirable to move away from a reliance on the GoPro cameras that many of us now use to collect recordings. GoPros, and other action cameras, are fine for reliable wide-angle video recording in auto-mode, but the in-built audio quality is poor, especially when the camera is encased in its waterproof housing and mounted on a vibrating vehicle or a human body. Also, there are certain constraints when trying to document such a sustained activity as two families on a cycle camping holiday. It is necessary to be able to carry all the equipment along for the ride, so it must be portable, robust and weatherproof. A dual 360° camera rig, as well as a binaural microphone and an ambisonic microphone, were used to record high quality 8k 360° footage and high quality directional sound at 24 bit/96 kHz resolution.

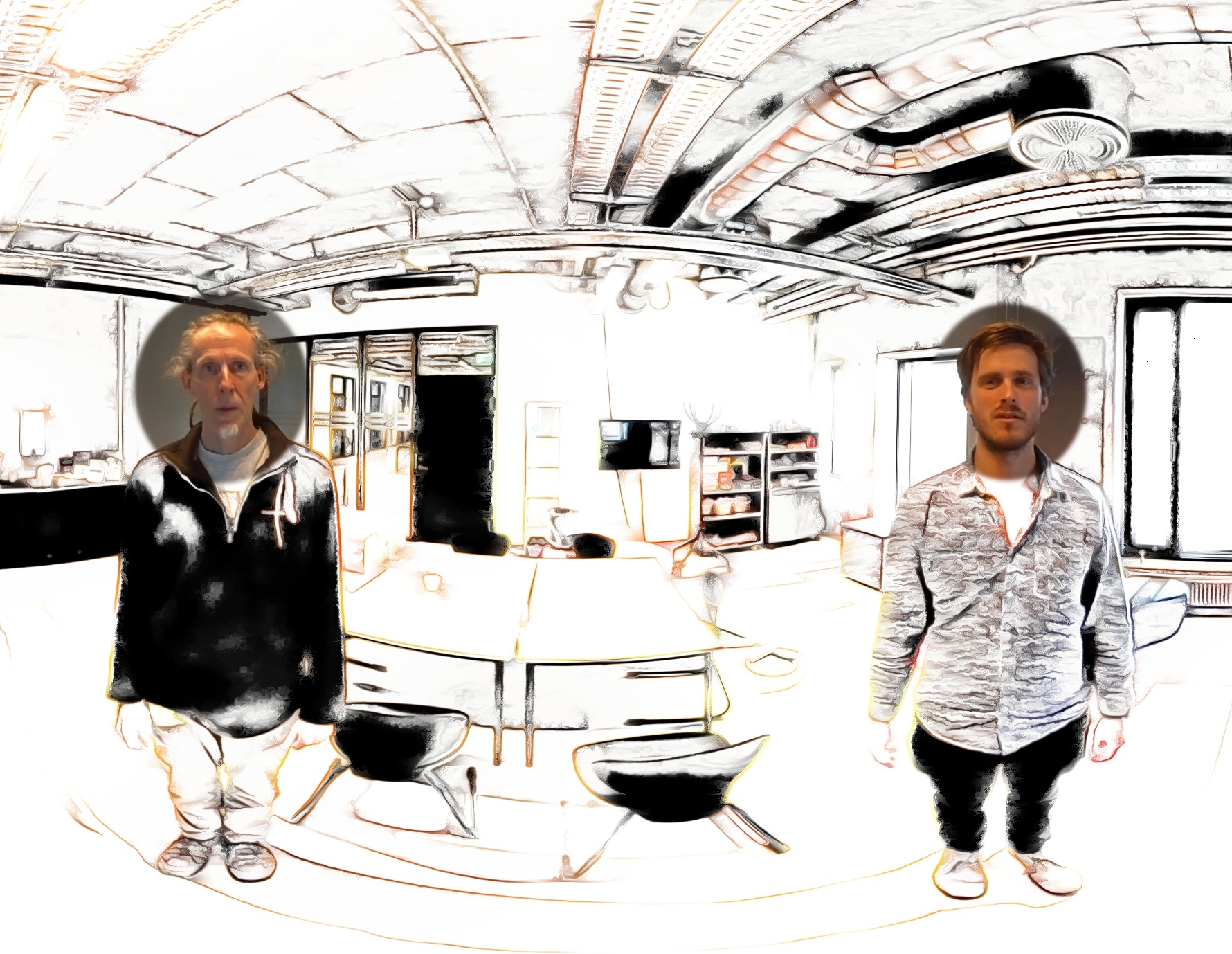

After much trial and error involving stitching videos, repositioning the sound field, and rendering the right formats/codecs, a video clip was made that combined the 360° video and ambisonic audio streams into a format that could be played on Google’s Android Jump app in a VR headset – probably the first research data of its kind in the world. The result is hard to describe, and it is highly artefactual, but it presents a complete 360° audio-visual record of the event – preparing and eating dinner outdoors at the campsite – that gives the impressive illusion that one is present at the scene (POV) and one can actively view, hear and track the participants as they move around. And the point? Well, it is a very different experience from watching an aspatial, framed 2D video with poor quality stereo or mono sound, which is unreliably treated as any members’ perspective. The mobility of the participants in the setting can be tracked, even when they make unexpected and unforeseen movements and action trajectories. A sound or voice can be precisely located, making it easier to identify who says what. There is no frame as such, so events and actions ‘off-frame’ or ‘backstage’ are usually available for inspection. In this capacity, it is in some way comparable to being back in 1895 at the gates of the Lumière factory when the Lumière brothers first recorded moving images of workers leaving at the end of the day, who probably did not have much awareness of the continuous gaze of the new camera.

Pokemon Go

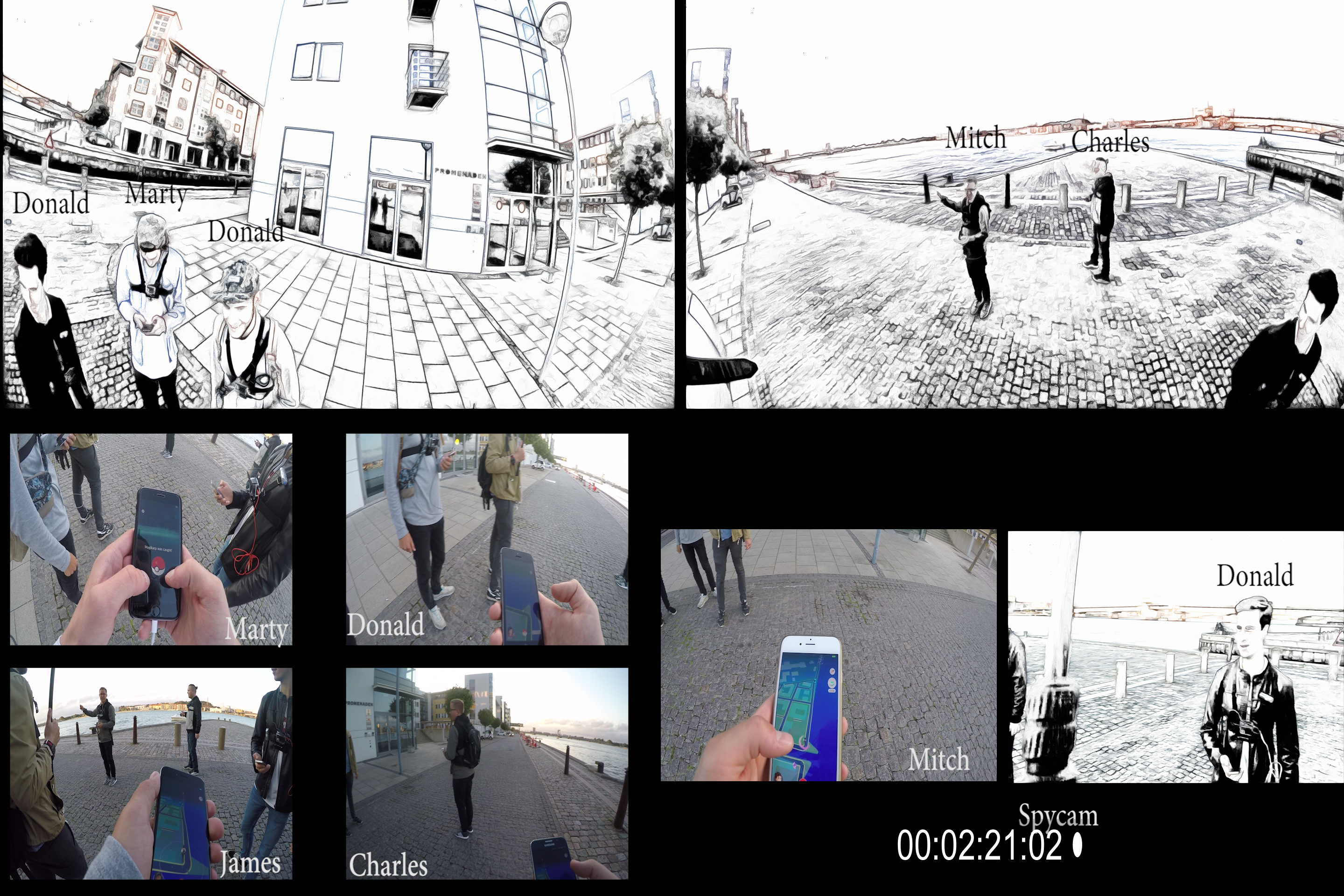

In the summer of 2016, streets, parks and other public spaces that were previously unnoticed by many people turned into inhabited places for Pokemon Go players around the globe. In Aalborg (Denmark), many people gather in parks and close to a previously forgotten part of the harbour area near the railway bridge. At any time of day or night, players congregate at these locations in small groups. Sometimes many people gather as one big group to hunt a rare and desirable ‘Dragonite’ or ‘Pikachu’. From our perspective, the game and the behaviour of the players serve as an interesting case study for EMCA, but how can we record what each individual is doing with their smartphone and still capture how the whole group is being mobile together? In this case, five players wore lavalier microphones and body-mounted GoPros pointed at their individual smartphones. In addition, one of us (wearing spy glasses) walked around the city with a 360° camera mounted on a long pole. With this setup, we captured high quality video and audio from the individuals and a 360° recording of the surroundings and the mobility of the group. Some persons walking by asked what was on the pole and tried to hide when we told them it was a camera! It is getting easier to capture Big Video, but the post-editing is getting more complex, richer and more fun. Questions such as how to transcribe 360° data or which microphone to privilege in the post-editing become crucial to address.

With the Pokemon Go data, we found that the composite stereo image of all the audio sources presented too much information. For instance, the group of five persons in a ‘mobile with’ split into two subgroups at one point, and to make this analysable, multiple audio channels are needed. Thus, we cannot rely on one microphone to capture such complex data – each microphone gives a highly selective record of the interaction unfolding (as does the video camera). In a recent data session, the general reaction was something like “I don’t know what to make of it” or “it is too complex, I don’t know what to look at”. But when we started moving around in the 360° recording with embedded 2D recordings from the GoPros and 2D transcripts, some of the participants started to notice “what one otherwise cannot see”, namely how other people oriented to the Pokemon Go players on the hunt as they walked by. For EMCA researchers the opportunities of 360° are thought-provoking on methodological, theoretical and practical levels, eg. what to transcribe, what to select for presentations and what to make of the context.

Advantages

We have ascertained that there are distinct advantages to using some of these new technologies. First, we can capture the situated relevancies of more extreme and complex multi-party practices. Second, there are new phenomena to study that were unavailable to enquiry or unimaginable before. Third, there are new modes of presentation and visualisation. Lastly, we have found it necessary to re-examine and rethink what ‘data’ is. There are also dangers and disadvantages, including the idiosyncrasies of human perception, the illusion of presence and the problem of sensor and computational artefacts, as well as the question of the reliance on a surveillance architecture, and other ethical problems. Methodologically, we are grappling with issues of perspectivation, incommensurability and epistemic adequacy.

Futures

For the future, we speculate that some emerging technologies have potential. For example, virtual reality and augmented/mixed reality are being hyped by the computer and gaming industry at present, which boasts of their capability to enhance ‘immersion’. These technologies could be used to visualise, navigate, annotate and share data in new ways, what we call inhabited data. In addition, they provide the means for an investigation of the taken-for-granted in a setting, in a similar vein to the sociologist Harold Garfinkel’s tutorials for students who tried to follow instructions while wearing inverting lenses. Another example is ‘light field’ technology, which promises a revolution in imaging that virtualises the camera (eg. position, depth of field, focus and frame rate). This could be used to explicitly challenge or problematise the objectivity of the ‘recording’ (or the ‘Tape’). A rediscovered conception of sound in three dimensions – nth-order ambisonics – allows computationally effective representations of a sound field. This could enhance our sense of where in space an utterance or sound comes from. And lastly, there are bio-sensing devices, developed under the banner of the ‘quantified self’ (we prefer the ‘qualifiable we’), that may bring all the senses and more subtle perceptions of embodiment into our enquiry.

If you are engaged in similar experiments, then please contact us.

An abridged and edited version of this webpage was published on the ROLSI blog.

References

Ashmore, Malcolm & Reed, Darren (2000). Innocence and Nostalgia in Conversation Analysis: The Dynamic Relations of Tape and Transcript. Forum: Qualitative Social Research 1(3).

Dyer, Richard (1997). White: Essays on Race and Culture. London: Routledge.

Erickson, Frederick (2004). Origins: A Brief Intellectual and Technological History of the Emergence of Multimodal Discourse Analysis. In LeVine, Philip & Scollon, Ron (Eds.), Discourse and Technology: Multimodal Discourse Analysis. Washington, DC: Georgetown University Press.

Leeds‐Hurwitz, Wendy (1987). The Social History of the Natural History of an Interview: A Multidisciplinary Investigation of Social Communication. Research on Language and Social Interaction 20(1-4): 1-51.

Goodwin, Charles (1993). Recording Interaction in Natural Settings. Pragmatics 3(2): 181-209.

Modaff, John V. & Modaff, Daniel P. (2000). Technical Notes on Audio Recording. Research on Language & Social Interaction 33(1): 101-118.

McIlvenny, Paul (2017). Mobilising the Micro-Political Voice: Doing the ‘Human Microphone’ and the ‘Mic-Check’. Journal of Language and Politics 16(1): 110-136.